Week 1: the gap is inside your company

I committed to sharing something every day in March about our AI transformation at Pactum. The pace is high, not just inside Pactum but across the whole world, so a lot happens and there's a lot to share. This is the week 1 recap, pulling together what I've learned across the posts, the meetings, and the late nights.

We rolled out Claude Code to 160 people

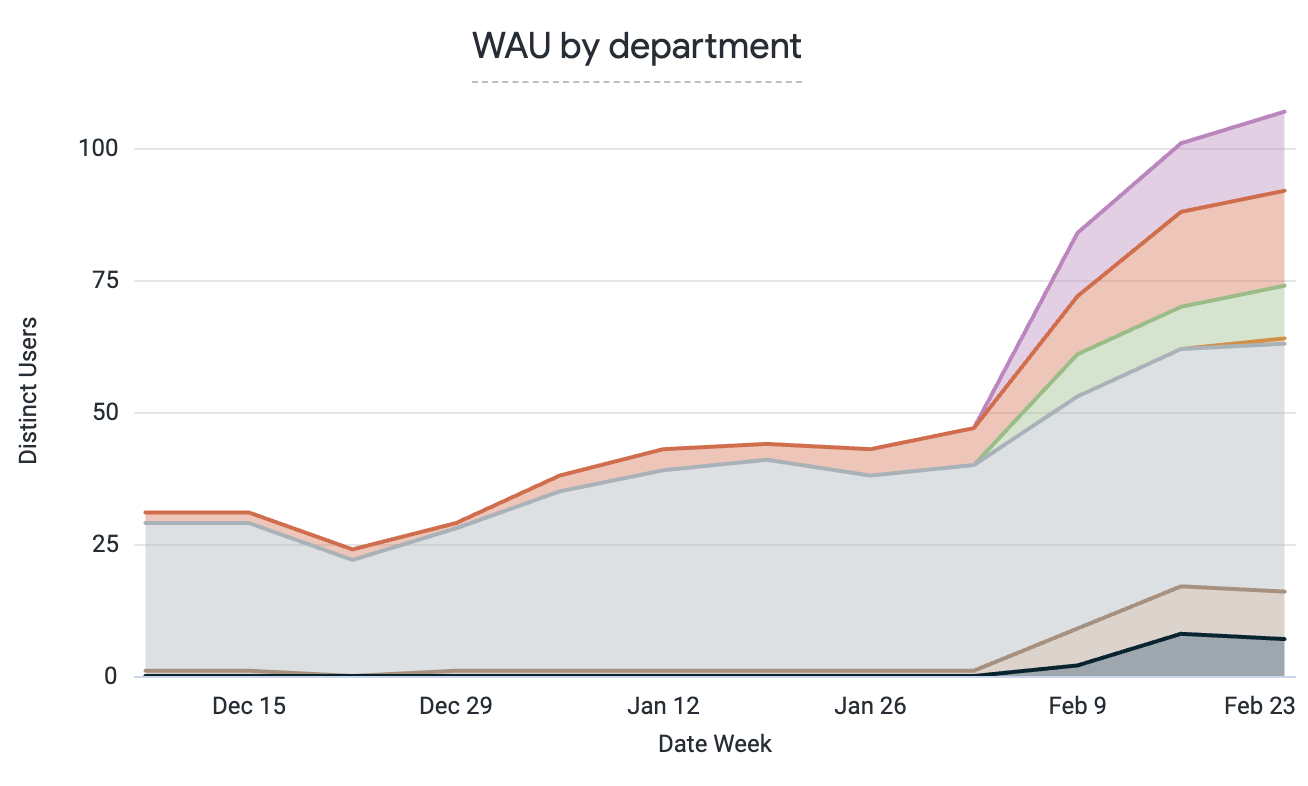

A few weeks ago, zero non-engineers at Pactum used AI agents for daily work. Today, 52% do.

We rolled out Claude Code to the entire company: 160 people distributed across 11 countries. And they are not using it for small things. Customer-facing folks are able to do 2x more meetings thanks to faster meeting prep, and account managers are saving 10+ hours each on reviewing and following up on missed actions. Our People team has already saved days by automating repetitive contract updates. And a PM saved herself 3 days of work by having Claude review 3 months' worth of development tickets and put together 31 test scripts for structured QA.

What I didn't appreciate before is how much repetitive work still exists in modern organizations. Simple workflows, where LLMs have nothing more than read-only access to a couple of systems of record, can create massive time savings.

What it actually took

There is so much hands-on tactical work that goes into making this a success. The strategic level is easy compared to the tactical one. I've spent the last several weeks as an IC, as a support engineer, as an enterprise architect, as a coach. And it mostly came at the cost of my sleep.

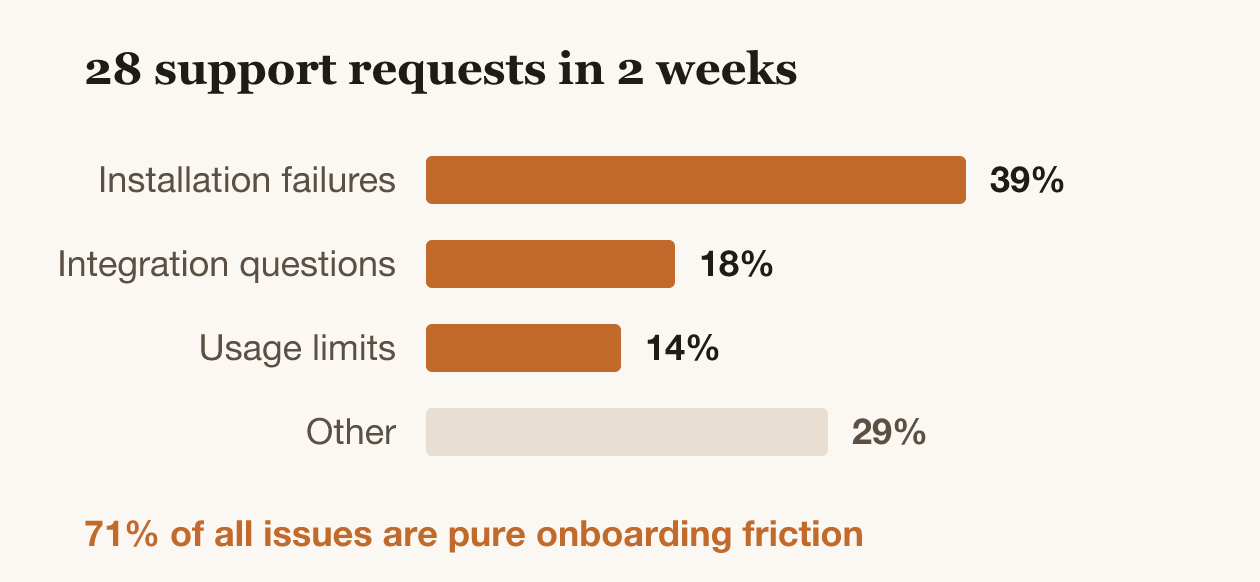

As we rolled out Claude, the #1 support issue wasn't "how do I prompt" or "what should I use AI for." It was brew conflicts on managed Macs. Across 28 support requests in two weeks, 39% were installation failures, 18% were integration questions ("can Claude connect to X?"), and 14% were about usage limits. Together, those three categories make up 71% of all requests, and all of it is pure onboarding friction.
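The three categories account for the whole 71%. As a quick sanity check, here is a small Python sketch. It assumes the percentages are rounded shares of the 28 requests; under that assumption the only whole counts that round to 39%, 18%, and 14% are 11, 5, and 4. These counts are a reconstruction from the percentages, not figures taken from the support log.

```python
# Counts back-derived from the rounded percentages in the text (an assumption:
# the post reports only percentages of 28 requests, not raw counts).
TOTAL_REQUESTS = 28
counts = {
    "installation failures": 11,  # 11/28 = 39.3%
    "integration questions": 5,   # 5/28 = 17.9%
    "usage limits": 4,            # 4/28 = 14.3%
}


def share(n: int, total: int = TOTAL_REQUESTS) -> int:
    """Return n as a percentage of total, rounded to the nearest whole percent."""
    return round(100 * n / total)


for category, n in counts.items():
    print(f"{category}: {n} requests ({share(n)}%)")

# Onboarding friction is the three categories combined.
onboarding = sum(counts.values())
print(f"onboarding friction: {onboarding} requests ({share(onboarding)}%)")
```

The combined line comes out at 20 requests (71%), matching the sum of the three rounded shares.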

The same tool, two completely different experiences

On Thursday evening I ran a session with the revenue leadership team. That single meeting captured the whole range.

A VP on the call typed one sentence into Claude: "Tell me everything I need to know about the (specific customer) opportunity and next steps." In seconds, he got a full brief: timeline, risk analysis, key contacts, all pulled from Salesforce notes and Gong calls. He didn't even ask it to break things down by category, but it did. "Man, I wish I had this a year ago managing the team," he said. "This is game-changing."

In the same meeting, another leader spent 20 minutes unable to get past a Salesforce sandbox login issue.

That gap is the actual challenge of enterprise AI rollout. It's not between companies that adopt AI and companies that don't. It's inside your own company, between the person who's flying and the person who's stuck on a login screen.

Serving the front end of the adoption curve, the early adopters, is easy. Serving the back end, the early majority and the laggards, takes so much work. I think the solution is not to try to make everyone a power user, but to simply solve those problems for them.

Learning when to trust it

In that same meeting, one of the leaders asked a question I keep hearing: "Where can I trust Claude vs. not?"

People's first instinct is to just believe it. You're wowed by what it comes up with, so you expect it to have god-like properties. The reasoning goes: it knows so much more than I do, so it must be all-powerful and better than me on every margin. But in reality it can easily miss context. It might not know things that are not written down. It might not choose to look up some bit of information that is actually critical context.

Knowing when to trust an agent and when not to is actually a skill you need to build up, similar to how googling was a skill 10 or 20 years ago. There was a huge difference between someone who could use Google effectively to get almost any answer quickly and someone who typed entire questions into Google and ended up nowhere. Google has improved so much by this point that it matters less. But Claude is definitely not there yet.

The infrastructure that makes it work

One thing you expect from a fellow employee is shared context. Unless they started a month ago, you expect them to understand how the company operates: the key people, the product, the culture, and so on.

An AI agent is like a bright university graduate who has knowledge of everything at the average level of the internet. But it does not have any understanding of your particular company, your organization, your product, your codebase.

And agents don't remember. Every time you start a new session (which you do tens of times a day), all of the context you gave it walks out the door. Before we solved this, the barrier to entry was too high: every session started with me copy-pasting links, looking up context, and re-explaining things. Since solving it, I use agents about 10x more in my actual work at Pactum.

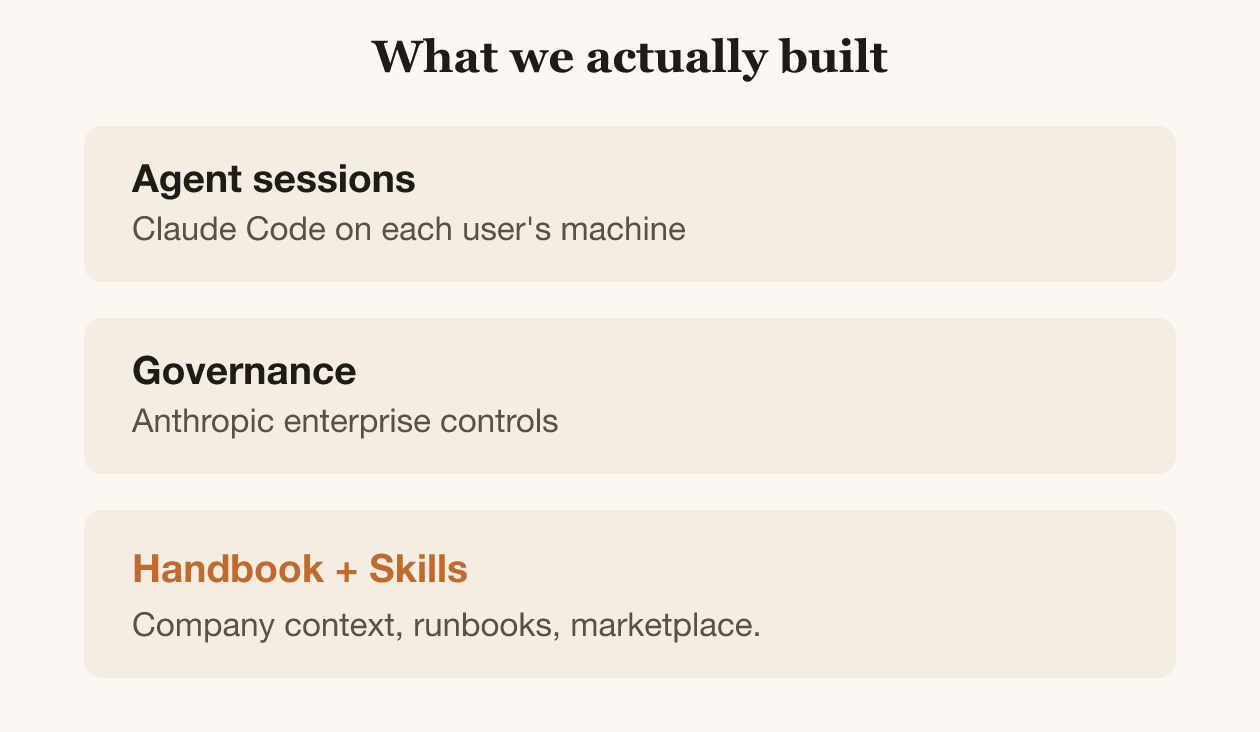

We solved it with a company handbook for agents: a simple index of links to the company's most important sources, automatically accessible to every agent session. At Pactum, we built this as a Markdown-based wiki. Most entries are a link to an original document plus a brief summary. The agent reads the index, follows the links, and pulls in what it needs.
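Here is a minimal Python sketch of how an agent might use such an index, assuming each entry is a Markdown bullet of the form `- [Title](link): summary`. The entry format, the example.com links, and the helper names `parse_index` and `links_to_follow` are all hypothetical. In practice the agent itself decides which links are relevant; the keyword overlap used here only stands in for that judgment.

```python
import re
from dataclasses import dataclass

# Hypothetical handbook index: one bullet per source, "- [Title](link): summary".
HANDBOOK_INDEX = """\
# Company handbook for agents
- [Pricing policy](https://wiki.example.com/pricing): How discounts are approved and by whom.
- [Customer health dashboard](https://wiki.example.com/health): Where account usage and risk data live.
- [Org chart](https://wiki.example.com/org): Key people, teams, and owners.
"""

ENTRY_RE = re.compile(r"^- \[(?P<title>[^\]]+)\]\((?P<link>[^)]+)\):\s*(?P<summary>.+)$")


@dataclass
class Entry:
    title: str
    link: str
    summary: str


def parse_index(markdown: str) -> list[Entry]:
    """Turn the index into entries; headings and other non-entry lines are skipped."""
    entries = []
    for line in markdown.splitlines():
        match = ENTRY_RE.match(line.strip())
        if match:
            entries.append(Entry(**match.groupdict()))
    return entries


def links_to_follow(entries: list[Entry], question: str) -> list[str]:
    """Naive relevance: keep an entry if a question word (4+ letters) appears in its title or summary."""
    words = {w for w in re.findall(r"[a-z]+", question.lower()) if len(w) > 3}
    return [
        e.link
        for e in entries
        if words & set(re.findall(r"[a-z]+", f"{e.title} {e.summary}".lower()))
    ]


print(links_to_follow(parse_index(HANDBOOK_INDEX), "Who approves discounts?"))
```

Keeping each entry to a link and a one-line summary keeps the index small enough to read at the start of every session, while the full documents stay in their original systems.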

Most companies are still thinking about this wrong

From what I've heard, most companies are thinking of AI agents as engineering tools, not general productivity tools. My LinkedIn posts reflect it: most reactions come from people building things, not from people using Claude for their daily work.

At Pactum, AI agents, even ones meant for coding like Claude Code, are general-purpose tools. That isn't true out of the box; it takes work to get there.

What I'd do differently

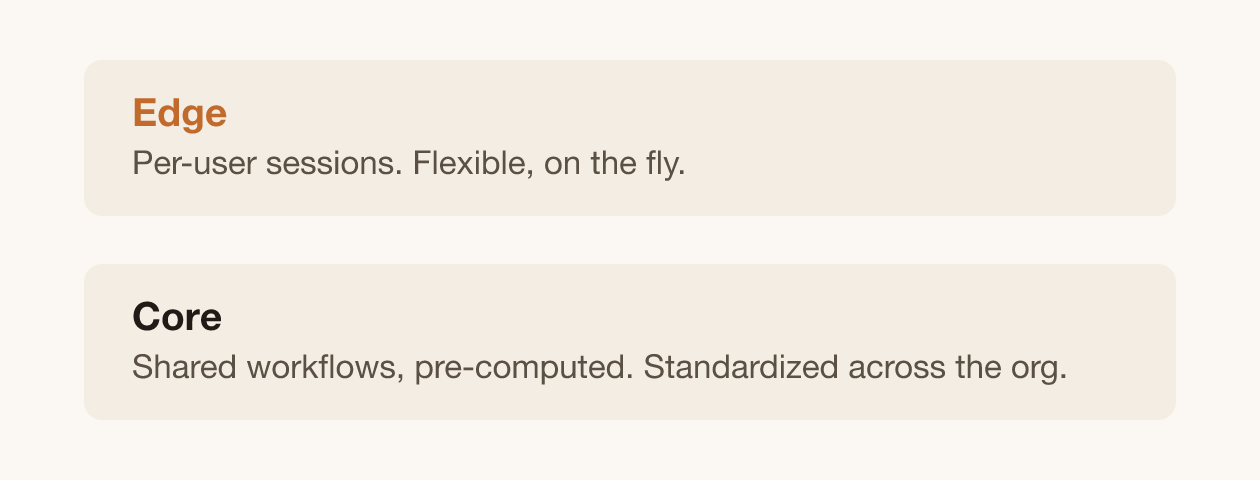

I took the approach of doing this fully bottom-up, and I've learned a lot about what works. I went all-in on everything being done by Claude on the user's computer, on the edge. The benefit is that you learn fast what people actually need through bottom-up experimentation.

But if I were starting again, I'd standardize those workflows early, in parallel. One pattern explains why: agents repeat the same work across the company. When summarizing a customer's state across Salesforce, product analytics, and usage data, every agent pulls together the same picture from scratch, every time. Martin Kosk, our enterprise architect, proposed a useful frame for this: core vs. edge. Edge is the end-user agent session, where everything is computed on the fly. Core is a set of recurring workflows (also agent-driven) that pre-compute and cache information for every edge session to reuse.

The parallel to data warehousing is obvious. These agents are doing ETL on the fly. The "core" starts looking like a data pipeline with better natural-language interfaces on top.
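The core/edge split can be sketched as a cache that a recurring job fills and that every edge session reads. This is a minimal Python illustration under stated assumptions: the `CoreCache` class, the source names, the 24-hour staleness window, and the customer "Acme" are all hypothetical, and a real core would run agent-driven workflows against Salesforce, product analytics, and usage data rather than the string joins used here.

```python
import time
from typing import Callable

# Hypothetical fetchers standing in for Salesforce, product analytics, usage data.
Fetcher = Callable[[str], str]


class CoreCache:
    """Core: pre-computes per-customer summaries so edge sessions can reuse them."""

    def __init__(self, sources: dict[str, Fetcher], max_age_s: float = 24 * 3600):
        self.sources = sources
        self.max_age_s = max_age_s  # assumed staleness window (24 hours)
        self._cache: dict[str, tuple[float, str]] = {}
        self.builds = 0  # how many times the expensive summary was computed

    def _build_summary(self, customer: str) -> str:
        # Stand-in for the agent-driven "ETL on the fly": pull from every source
        # and combine. Here it simply joins each source's output line by line.
        self.builds += 1
        return "\n".join(f"{name}: {fetch(customer)}" for name, fetch in self.sources.items())

    def refresh(self, customers: list[str]) -> None:
        """Recurring core job (e.g. nightly): recompute and store each summary."""
        for customer in customers:
            self._cache[customer] = (time.time(), self._build_summary(customer))

    def get(self, customer: str) -> str:
        """Edge lookup: reuse the cached summary, rebuilding only if missing or stale."""
        entry = self._cache.get(customer)
        if entry is None or time.time() - entry[0] > self.max_age_s:
            entry = (time.time(), self._build_summary(customer))
            self._cache[customer] = entry
        return entry[1]


# Usage with a hypothetical customer "Acme".
core = CoreCache({
    "crm": lambda c: f"{c} renewal due in Q3",
    "analytics": lambda c: f"{c} weekly active users trending up",
})
core.refresh(["Acme"])
for _ in range(5):  # five separate edge sessions ask about the same customer
    core.get("Acme")
print(core.builds)  # 1: built once by the core job, then reused by every edge session
```

As in a data warehouse, the expensive pull across systems happens once per refresh, and each edge session pays only for a lookup. The on-the-fly fallback in `get` keeps edge sessions working for customers the core job hasn't covered yet.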

If you standardize the repeatable workflows centrally, you get cost savings, but you also get something more important: you don't need to teach everyone how to use Claude.

Less than four weeks in, we aren't decelerating. I'm already thinking past adoption into a different question: how do you design information flows, responsibilities, and processes when agents are doing a growing share of the actual work?

See you next week. In the meantime, I'll keep wrestling with unglamorous, bog-standard internal IT issues.